Migas 1.5

The first foundation model to fuse text and time series — up to 14% more accurate than state-of-the-art baselines.

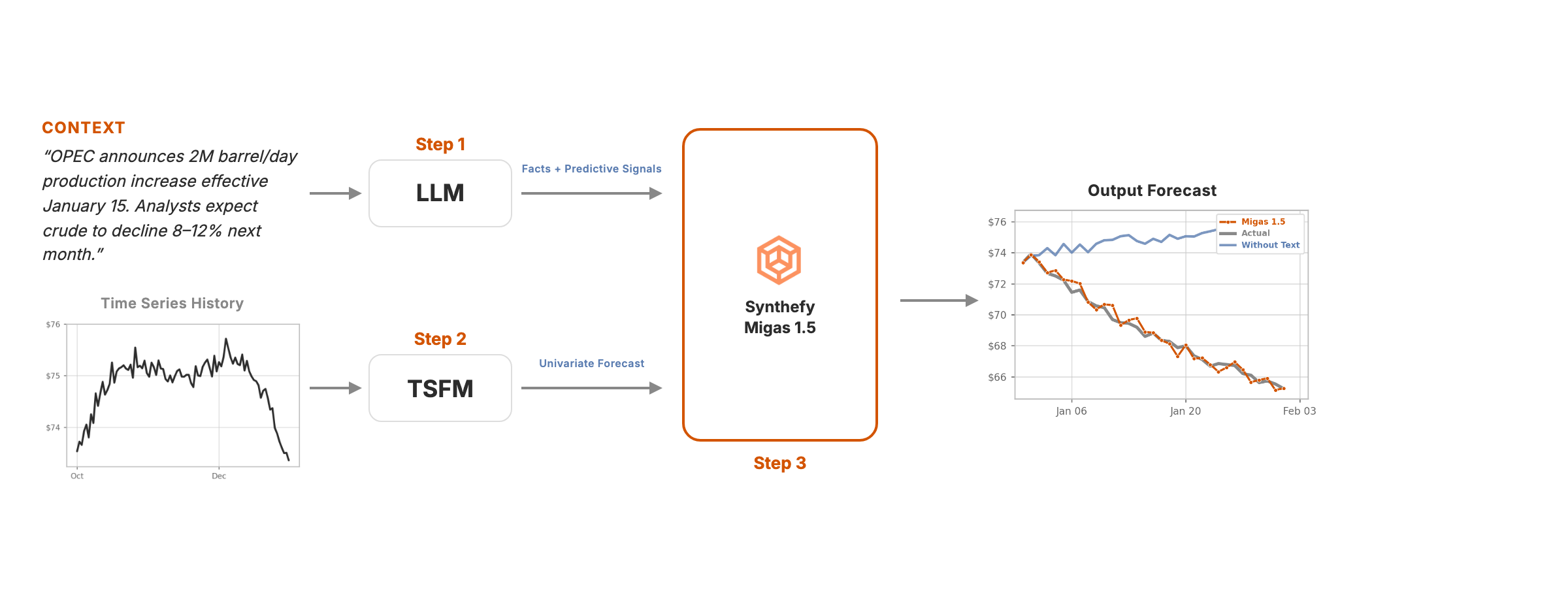

How Migas 1.5 works

Model Elo Ratings

Ranked by pairwise wins across 86 datasets. Migas 1.5 leads all compared approaches.

Elo converts head-to-head forecasting results into a single score: a model gains rating when it beats another model on a dataset by achieving a lower mean MAE (mean absolute error). Higher bars indicate stronger performance across the full benchmark set.
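To make the scoring concrete, here is a minimal sketch of an Elo computation over per-dataset mean MAE. It is not the procedure used for the chart above: the K-factor of 32, the starting rating of 1000, the 400-point logistic scale, and the order in which matchups are processed are all assumptions, and the model names, dataset names, and MAE values are invented for illustration.

```python
import itertools


def elo_from_mae(mae, k=32.0, base=1000.0):
    """Compute Elo ratings from per-dataset mean MAE.

    mae maps dataset name -> {model name: mean MAE}. Lower MAE wins.
    k and base are assumed values, not taken from the Migas benchmark.
    """
    models = sorted({m for scores in mae.values() for m in scores})
    ratings = {m: base for m in models}

    # Process datasets and model pairs in sorted order so the result is deterministic.
    for dataset in sorted(mae):
        scores = mae[dataset]
        for a, b in itertools.combinations(sorted(scores), 2):
            # Expected score of model a against model b (standard Elo logistic curve).
            expected_a = 1.0 / (1.0 + 10 ** ((ratings[b] - ratings[a]) / 400.0))
            if scores[a] < scores[b]:
                actual_a = 1.0  # a had the lower error, so a wins
            elif scores[a] > scores[b]:
                actual_a = 0.0
            else:
                actual_a = 0.5  # tie
            delta = k * (actual_a - expected_a)
            ratings[a] += delta
            ratings[b] -= delta
    return ratings


# Hypothetical MAE values, for illustration only.
toy_mae = {
    "dataset_1": {"model_a": 0.10, "model_b": 0.20, "model_c": 0.30},
    "dataset_2": {"model_a": 0.15, "model_b": 0.25, "model_c": 0.35},
}
print(elo_from_mae(toy_mae))
```

Sequential Elo updates depend on matchup order, so published leaderboards often average over shuffled orders or fit the ratings jointly; this sketch does neither.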

Win Rate Against Baselines

Against each baseline, Migas 1.5 achieves the lower per-dataset mean MAE on more than 75% of datasets.

Each row is a direct matchup. The orange segment shows the share of datasets where Migas 1.5 had the lower forecast error; the segment to its right shows the share where the baseline won.
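The win rate in each row can be computed as in the sketch below. It assumes one mean MAE value per dataset for each model and counts ties as non-wins; the function name, dataset names, and numbers are hypothetical and are not taken from the benchmark.

```python
def win_rate(candidate_mae, baseline_mae):
    """Share of shared datasets where the candidate has strictly lower mean MAE.

    Both arguments map dataset name -> mean MAE. Ties count as non-wins,
    which is an assumption; the source does not say how ties are handled.
    """
    shared = candidate_mae.keys() & baseline_mae.keys()
    if not shared:
        raise ValueError("no datasets in common")
    wins = sum(candidate_mae[d] < baseline_mae[d] for d in shared)
    return wins / len(shared)


# Hypothetical values, for illustration only.
candidate = {"d1": 0.1, "d2": 0.2, "d3": 0.5, "d4": 0.3}
baseline = {"d1": 0.2, "d2": 0.3, "d3": 0.4, "d4": 0.4}
print(win_rate(candidate, baseline))  # 0.75 (the candidate wins on d1, d2, and d4)
```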

Performance Across Domains

Migas 1.5 achieves the lowest mean MAE across stocks (FNSPID), commodities (Suite), and exchange rates (Trading Economics).

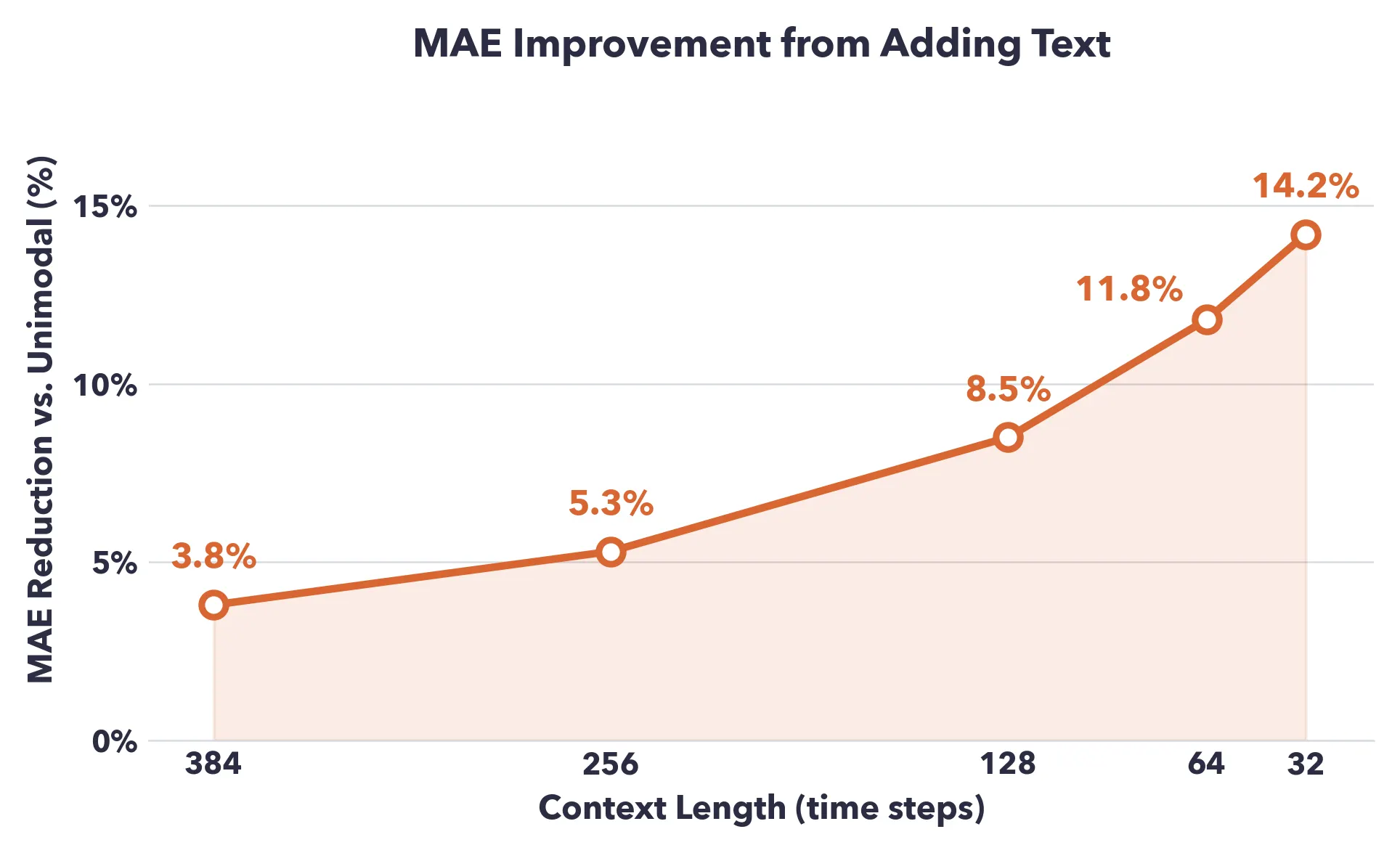

Shines Brightest with Short Context

The shorter the historical record, the larger the gain from text. At a context length of 32 steps, MAE drops by 14.2%.
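A percentage drop in MAE is a relative reduction against a reference error. The sketch below shows the arithmetic. The source does not say which reference the 14.2% is measured against, so the reference here is simply called `baseline_mae`, and the example values are invented.

```python
def relative_mae_reduction(baseline_mae, model_mae):
    """Fractional reduction in MAE relative to a baseline (0.25 means 25% lower)."""
    if baseline_mae <= 0:
        raise ValueError("baseline_mae must be positive")
    return (baseline_mae - model_mae) / baseline_mae


# Hypothetical values: a baseline MAE of 2.0 and a model MAE of 1.5.
print(relative_mae_reduction(2.0, 1.5))  # 0.25, i.e. a 25% drop
```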

From Migas 1.0 to 1.5

Migas 1.0: a Mixture-of-Experts router over multiple time series foundation models (TSFMs). Ranked 2nd on GIFT-Eval for univariate forecasting.
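The source does not describe how the router is built. As a purely illustrative sketch of the general idea, the code below combines forecasts from several expert models using softmax gating weights. The function names, the use of soft (rather than top-k) routing, and all numbers are assumptions, not the Migas implementation.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_forecasts(expert_forecasts, router_logits):
    """Blend per-expert forecasts with softmax gating weights.

    expert_forecasts: list of forecasts, one per expert, all the same length.
    router_logits: one score per expert; in a real system a learned router
    would produce these from the input series (assumed, not shown here).
    """
    weights = softmax(router_logits)
    horizon = len(expert_forecasts[0])
    return [
        sum(w * forecast[t] for w, forecast in zip(weights, expert_forecasts))
        for t in range(horizon)
    ]


# Two hypothetical experts with equal router scores: the blend is their average.
print(route_forecasts([[1.0, 2.0], [3.0, 4.0]], [0.0, 0.0]))  # [2.0, 3.0]
```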

"What combination of models best fits this data?"

Migas 1.5: adds textual context, with a 76.3% win rate over Migas 1.0. It handles scenarios that no purely numerical model can address.

"What does the surrounding context tell us about the future?"